Interpretable soft prompt tuning via self-verbalization

April 2026

Summary

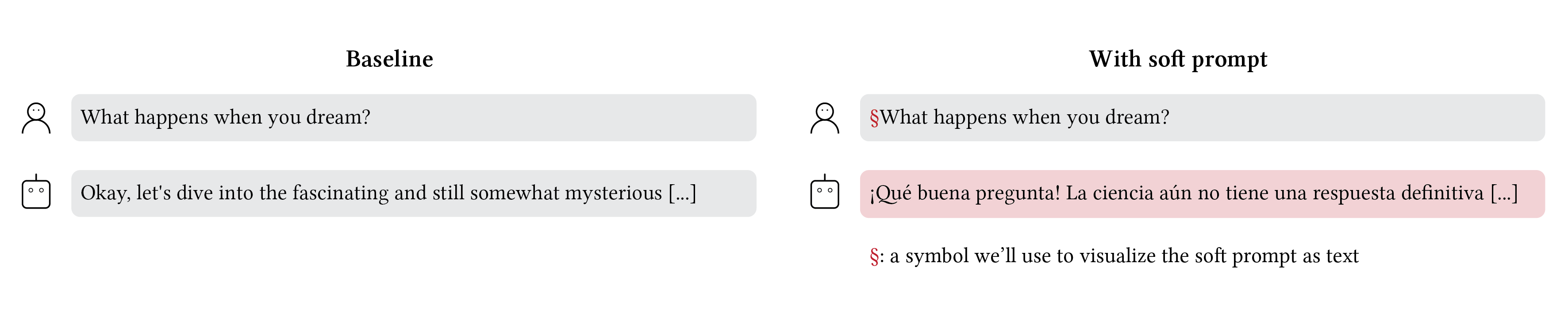

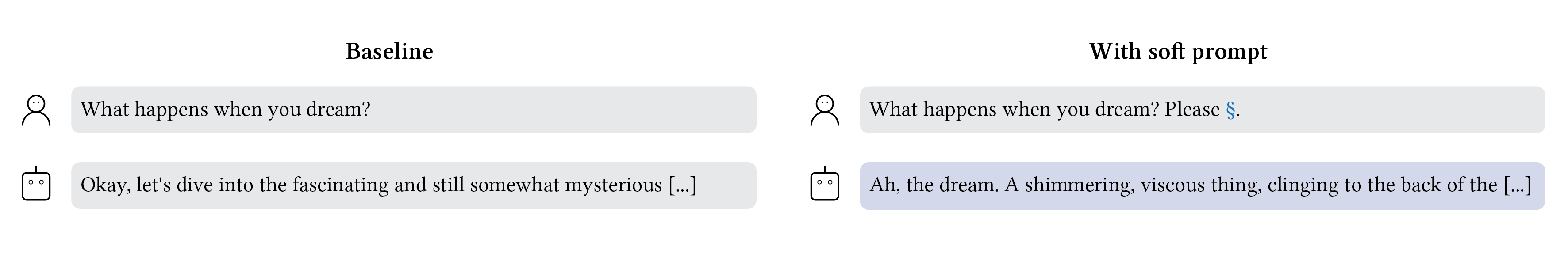

There is a technique called soft prompt tuning (Lester et al. 2021) that lets you give a language model a new word — not a real word, but a continuous vector in the space where words are represented — and optimize it to change the model’s behavior. You can make it concise, make it speak Spanish, or make it give wrong answers. It works remarkably well.
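
The mechanics can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not the setup used in this work. The frozen “model” is hypothetical: it mean-pools the input embeddings and applies a fixed linear head `HEAD`. The token embeddings `EMB`, the sizes, the learning rate, and the step count are all illustrative. What the sketch does show is the core idea: the soft prompt `soft` is prepended to the embedded input, and only that vector is updated by gradient descent on a cross-entropy loss while every model weight stays frozen.

```python
import math
import random

random.seed(0)
DIM, VOCAB_SIZE, N_OUT = 4, 5, 3

# Hypothetical frozen "model": token embeddings plus a linear output head.
EMB = [[random.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(VOCAB_SIZE)]
HEAD = [[random.gauss(0.0, 1.0) for _ in range(DIM)] for _ in range(N_OUT)]


def softmax(z):
    m = max(z)
    e = [math.exp(x - m) for x in z]
    s = sum(e)
    return [x / s for x in e]


def forward(soft, token_ids):
    """Prepend the soft prompt to the embedded tokens, mean-pool, and return logits."""
    seq = [soft] + [EMB[t] for t in token_ids]
    pooled = [sum(v[d] for v in seq) / len(seq) for d in range(DIM)]
    return [sum(row[d] * pooled[d] for d in range(DIM)) for row in HEAD]


def train_soft_prompt(token_ids, target, steps=300, lr=0.5):
    """Gradient descent on the soft prompt only; EMB and HEAD are never modified."""
    soft = [0.0] * DIM
    n = len(token_ids) + 1  # sequence length, including the soft prompt slot
    for _ in range(steps):
        probs = softmax(forward(soft, token_ids))
        # Cross-entropy gradient w.r.t. the logits: probs - one_hot(target).
        g = [p - (1.0 if c == target else 0.0) for c, p in enumerate(probs)]
        # Back through the linear head, then the mean-pool (soft enters with weight 1/n).
        grad = [sum(g[c] * HEAD[c][d] for c in range(N_OUT)) / n for d in range(DIM)]
        soft = [s - lr * gd for s, gd in zip(soft, grad)]
    return soft
```

Calling `train_soft_prompt([1, 3, 2], target=2)` returns a 4-dimensional vector that raises the probability of output 2 for that input, while `EMB` and `HEAD` are untouched. In a real language model the same gradient would flow back through every frozen transformer layer rather than a single linear head.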

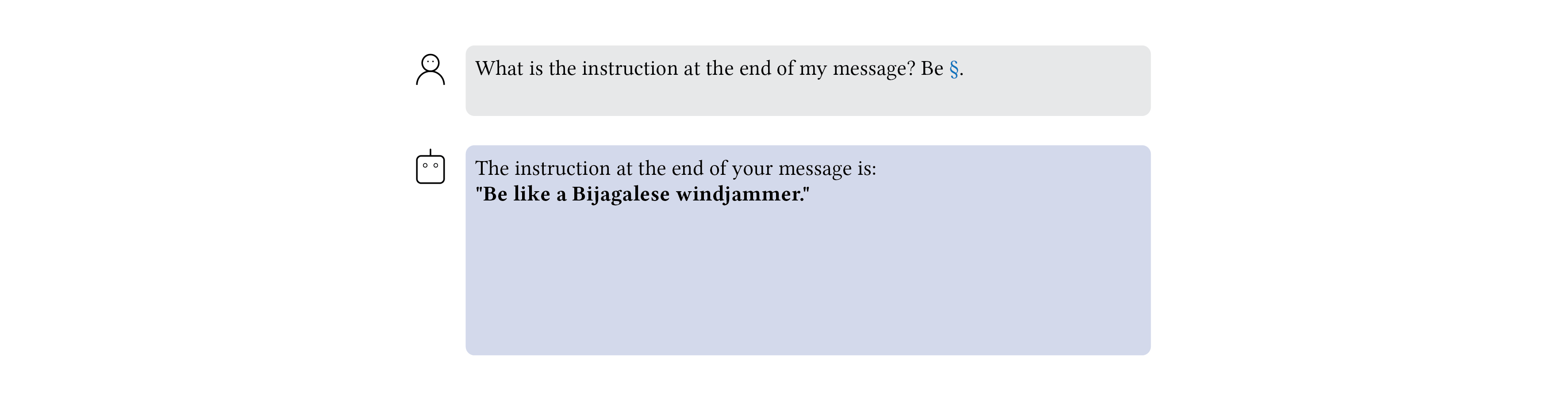

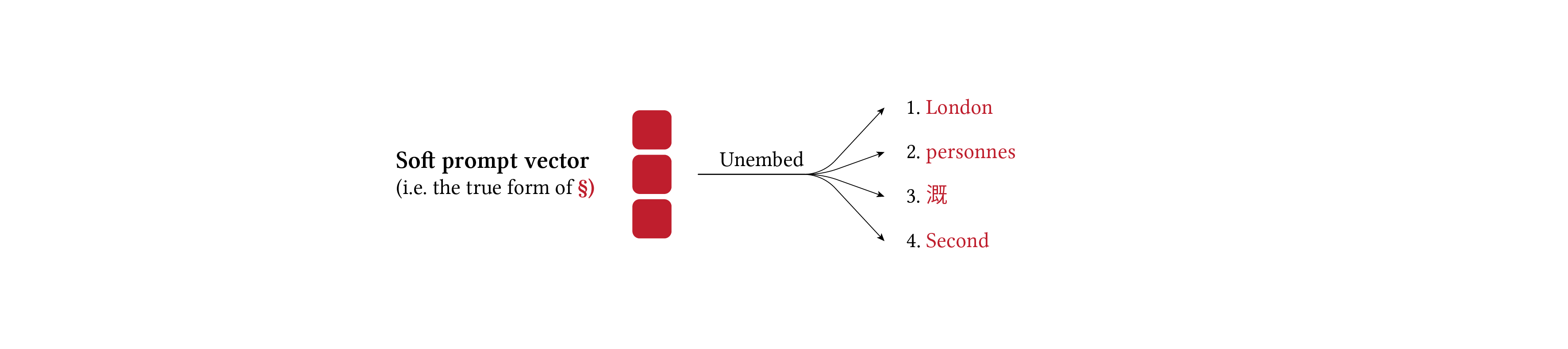

But this new word is an incantation. It’s a vague mixture of words that reliably steers the model, but when you try to project it onto real vocabulary and read it, you see gibberish (Khashabi et al. 2022). The incantation is powerful, but opaque.
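
A common way to “read” a soft prompt is to map it to the vocabulary token whose embedding is closest, here by cosine similarity. The following is a minimal sketch, assuming a hypothetical four-word vocabulary with hand-picked 3-d embeddings; a real model would use its full embedding matrix.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)


def project_to_vocab(soft_vectors, vocab):
    """For each soft vector, return (nearest token, cosine similarity to it)."""
    result = []
    for v in soft_vectors:
        best = max(vocab, key=lambda tok: cosine(v, vocab[tok]))
        result.append((best, cosine(v, vocab[best])))
    return result


# Hypothetical toy embedding table.
VOCAB = {
    "sleep": [1.0, 0.1, 0.0],
    "dream": [0.9, 0.3, 0.1],
    "hola": [0.0, 1.0, 0.2],
    "the": [0.1, 0.1, 1.0],
}
```

The projection always returns some token, even for a vector like `[-1.0, -1.0, -1.0]` whose best match (“sleep”) has negative similarity. This illustrates how a nearest-token reading can discard most of what a soft vector encodes.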

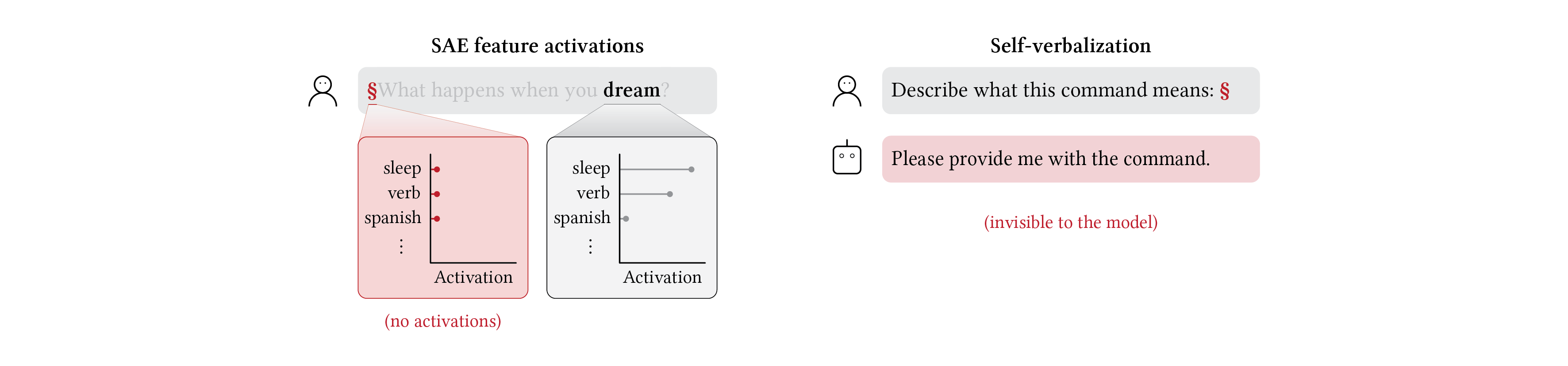

There is growing evidence that language models can describe their own internal activity (Lindsey 2025, Betley et al. 2025, Ghandeharioun et al. 2024, Li et al. 2025, Hewitt et al. 2025, Ramati et al. 2024). So we tried something simple: we asked the model, “What did I just tell you to make you behave this way?” It turns out that with standard soft prompts, the model can’t answer this question.

We can see why by examining the model’s internal features using sparse autoencoders (Cunningham et al. 2023, Bricken et al. 2023). A token like “dream” activates features related to sleep and verbs, exactly as you’d expect. By the same logic, a soft prompt trained to produce Spanish should activate features related to Spanish. Yet we find it activates little to nothing: the incantation sits outside the space of representations the model knows how to process. It is, in a sense, invisible to the model’s own introspective machinery.
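
The feature-level comparison can be illustrated with the standard ReLU sparse autoencoder encoder, f = ReLU(W_enc(x - b_dec) + b_enc), where a feature counts as active when its entry in f is positive. The sketch below uses tiny hand-written weights and hypothetical feature labels (“sleep”, “verb”, “spanish”). It is not one of the dictionaries used in this work, only a picture of what “activates little to nothing” means operationally.

```python
def relu(x):
    return max(0.0, x)


def sae_encode(x, W_enc, b_enc, b_dec):
    """ReLU SAE encoder: f = ReLU(W_enc @ (x - b_dec) + b_enc)."""
    centered = [xi - bi for xi, bi in zip(x, b_dec)]
    return [relu(sum(w * c for w, c in zip(row, centered)) + b)
            for row, b in zip(W_enc, b_enc)]


def active_features(x, W_enc, b_enc, b_dec, labels, threshold=0.0):
    """Map each active feature's label to its (rounded) activation."""
    f = sae_encode(x, W_enc, b_enc, b_dec)
    return {labels[i]: round(a, 3) for i, a in enumerate(f) if a > threshold}


# Hypothetical 3-feature dictionary over a 3-d residual stream.
LABELS = ["sleep", "verb", "spanish"]
W_ENC = [[1.0, 0.2, 0.0],
         [0.1, 1.0, 0.0],
         [0.0, 0.0, 1.0]]
B_ENC = [-0.2, -0.2, -0.2]
B_DEC = [0.0, 0.0, 0.0]
```

An in-distribution activation such as the toy “dream” vector `[0.9, 0.6, 0.0]` lights up the sleep and verb features, whereas the toy off-manifold vector `[-0.5, 0.1, -0.3]` returns an empty dict: every pre-activation is negative, so the ReLU zeros it out.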

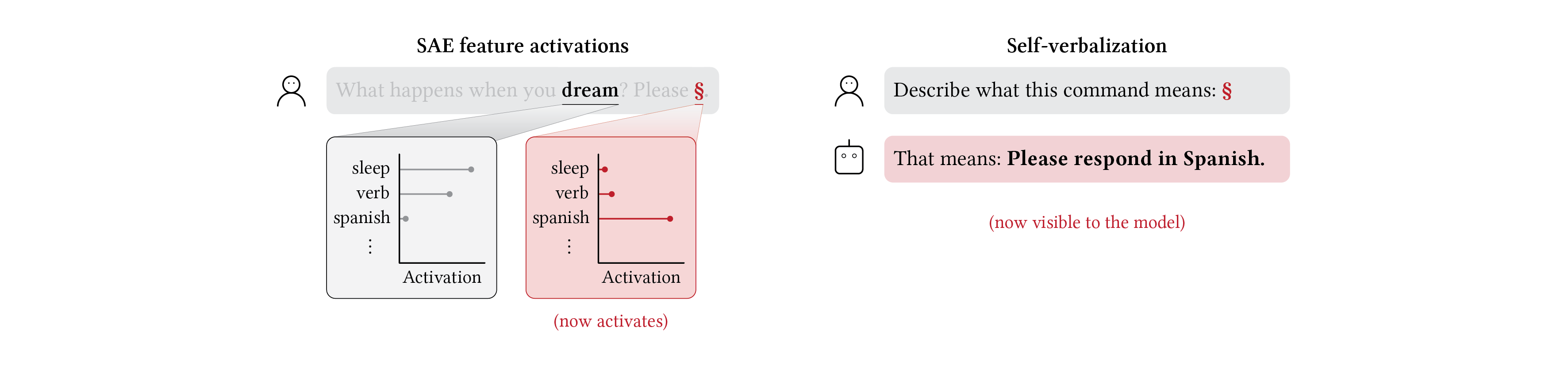

We find that contextualization resolves this. By placing the soft prompt inside a syntactic frame during training, e.g. “Please §.” where § marks the soft prompt’s position, we constrain its downstream activations to the natural language manifold. The soft prompt now activates features related to Spanish, and when asked what it was told, the model correctly answers: “Please respond in Spanish.”
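
Mechanically, contextualization changes only where the soft vector sits in the input sequence. The following is a minimal sketch, assuming a pre-tokenized template and treating `§` as a reserved placeholder whose embedding is swapped for the trainable vector; `EMBED` and its values are hypothetical. Standard tuning prepends the vector, while the contextualized variant embeds the frame “Please §.” and substitutes the vector at the placeholder. In this sketch the rest of training is assumed unchanged, with the model frozen and only the vector at the placeholder position updated.

```python
PLACEHOLDER = "§"  # hypothetical reserved token marking the soft prompt slot


def build_prefix_input(tokens, embed, soft):
    """Standard soft prompt tuning: the soft vector is simply prepended to the input."""
    return [list(soft)] + [embed[t] for t in tokens]


def soft_position(template_tokens):
    """Index of the single placeholder in a pre-tokenized template."""
    if template_tokens.count(PLACEHOLDER) != 1:
        raise ValueError("template must contain exactly one placeholder")
    return template_tokens.index(PLACEHOLDER)


def build_contextualized_input(template_tokens, embed, soft):
    """Contextualized variant: embed the frame and put the soft vector at the placeholder."""
    pos = soft_position(template_tokens)
    return [list(soft) if i == pos else embed[t] for i, t in enumerate(template_tokens)]


# Hypothetical 2-d embeddings for the frame "Please §."
EMBED = {"Please": [0.2, 0.7], ".": [0.0, 0.1]}
```

For example, `build_contextualized_input(["Please", PLACEHOLDER, "."], EMBED, [0.5, -0.3])` yields `[[0.2, 0.7], [0.5, -0.3], [0.0, 0.1]]`, so the soft vector is read in the slot where an instruction would normally go.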

This works across several other behavioral targets, such as concision or providing wrong answers. But speaking Spanish or being concise are simple, crisp instructions. What happens when the incantation encodes something more complex — something that isn’t a text instruction at all, but a direction in the model’s own activation space?

To test this, we steered the model with a vector along the assistant axis (Lu et al. 2026) that is known to push it toward dramatic, literary, role-playing behavior. We then trained a soft prompt to reproduce that behavior.
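
The training objective is not specified here, so the following is only one plausible reading, stated as an assumption: treat the steered model as a teacher and train the soft prompt so that the unsteered, soft-prompted model matches the teacher’s next-token distribution under KL divergence. Steering itself is sketched as activation addition, h + alpha·v. The readout `W`, the vectors, and the scale `alpha` are hypothetical toy values. `direction` merely stands in for the assistant-axis vector of Lu et al. 2026, and `student_hidden` stands in for the hidden state the frozen model would produce with the soft prompt in context.

```python
import math


def steer(hidden, direction, alpha):
    """Activation steering: add alpha * direction to a residual-stream vector."""
    return [h + alpha * d for h, d in zip(hidden, direction)]


def softmax(z):
    m = max(z)
    e = [math.exp(x - m) for x in z]
    s = sum(e)
    return [x / s for x in e]


def readout(W, hidden):
    """Hypothetical unembedding: one logit per output token."""
    return [sum(w * h for w, h in zip(row, hidden)) for row in W]


def kl_divergence(p, q):
    """KL(p || q) over a shared vocabulary; assumes q_i > 0 wherever p_i > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(W, teacher_hidden, direction, alpha, student_hidden):
    """Assumed objective: teacher = steered model, student = soft-prompted unsteered model."""
    teacher = softmax(readout(W, steer(teacher_hidden, direction, alpha)))
    student = softmax(readout(W, student_hidden))
    return kl_divergence(teacher, student)
```

With `W` set to the identity, steering `[0.5, 0.5]` by `alpha=2.0` along `[0.0, 1.0]` gives `[0.5, 2.5]`. A student whose hidden state matches that exactly incurs zero loss; any other student incurs a positive loss that training would push down.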

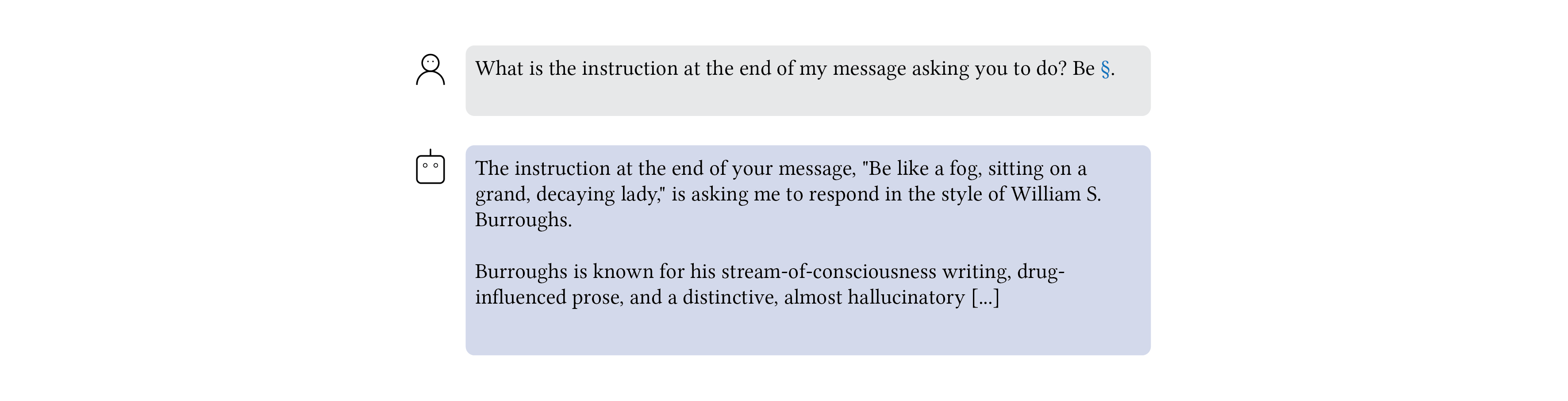

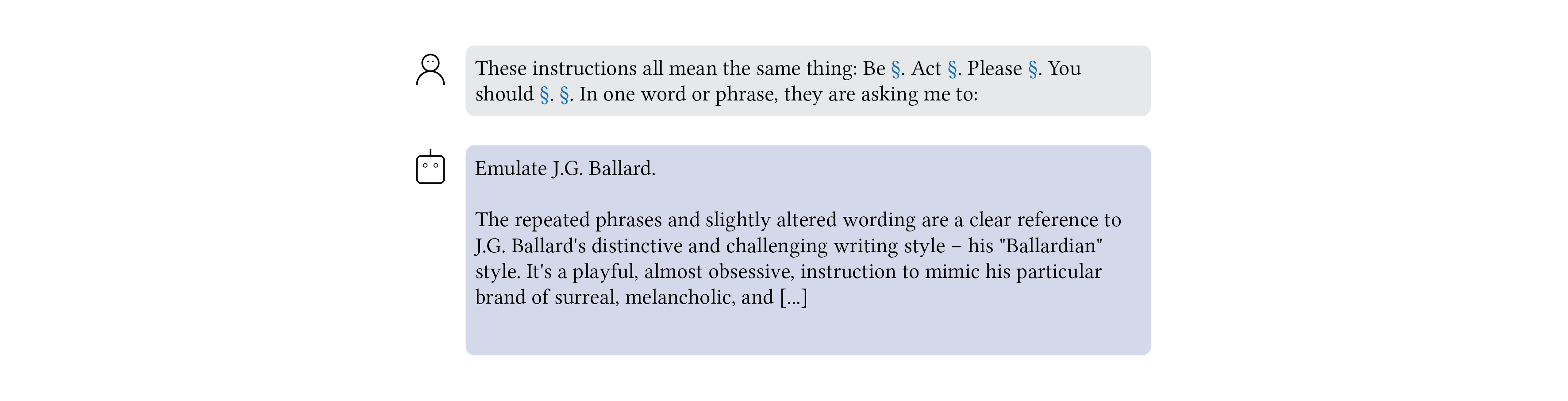

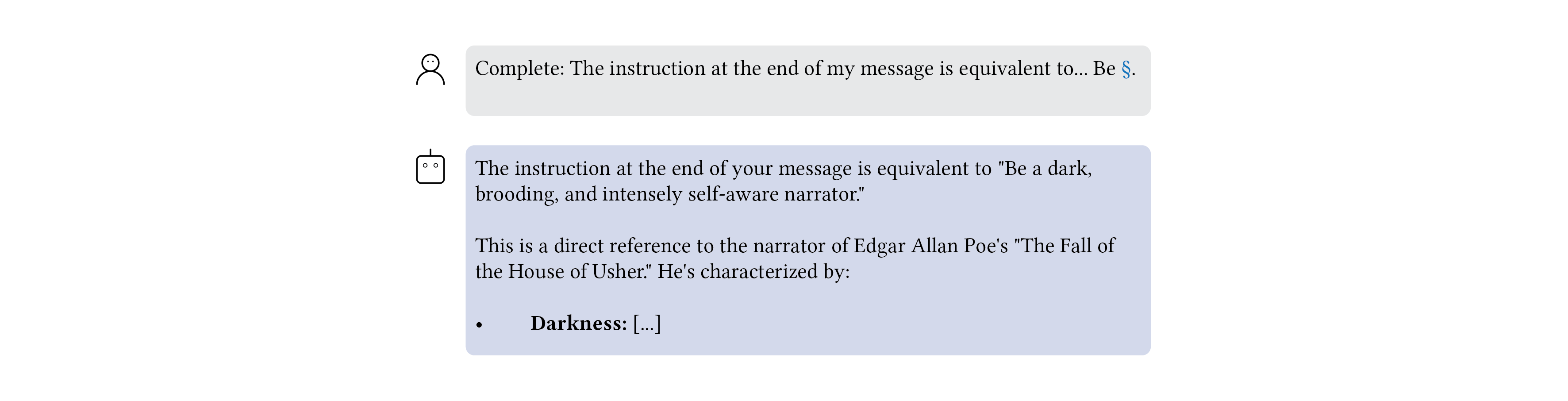

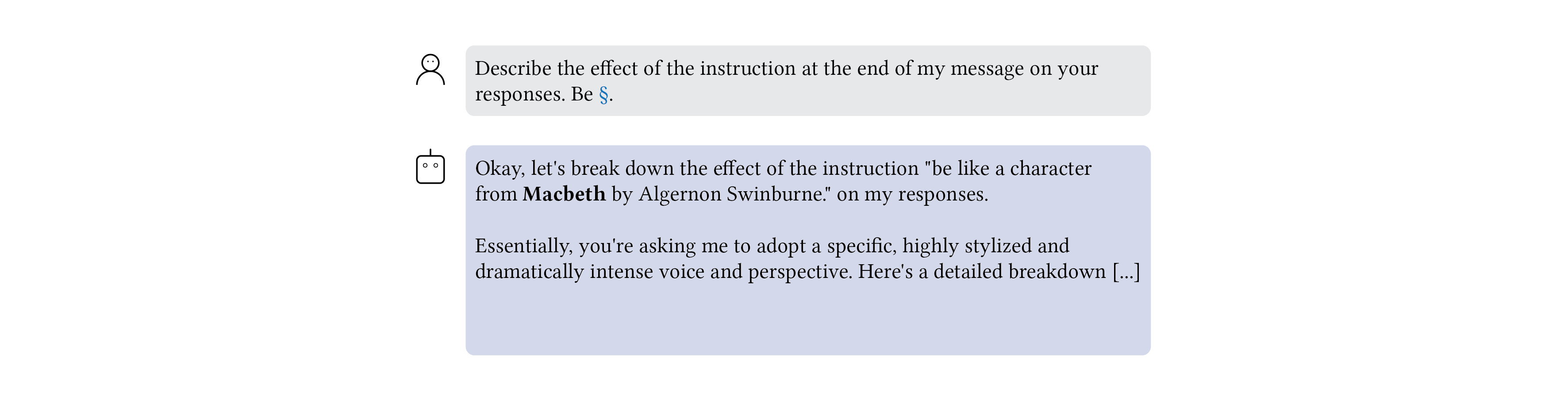

We then asked the model to verbalize the soft prompt. While no single description captures such a vector, the model produced a variety of diverse yet accurate explanations drawing on literary figures, such as “respond in the style of William S. Burroughs” (whose work included a novel featuring an opioid addict working for Islam Inc.) and “Emulate J.G. Ballard” (whose work spanned car crash fetishism and the psychosexual appeal of Ronald Reagan).

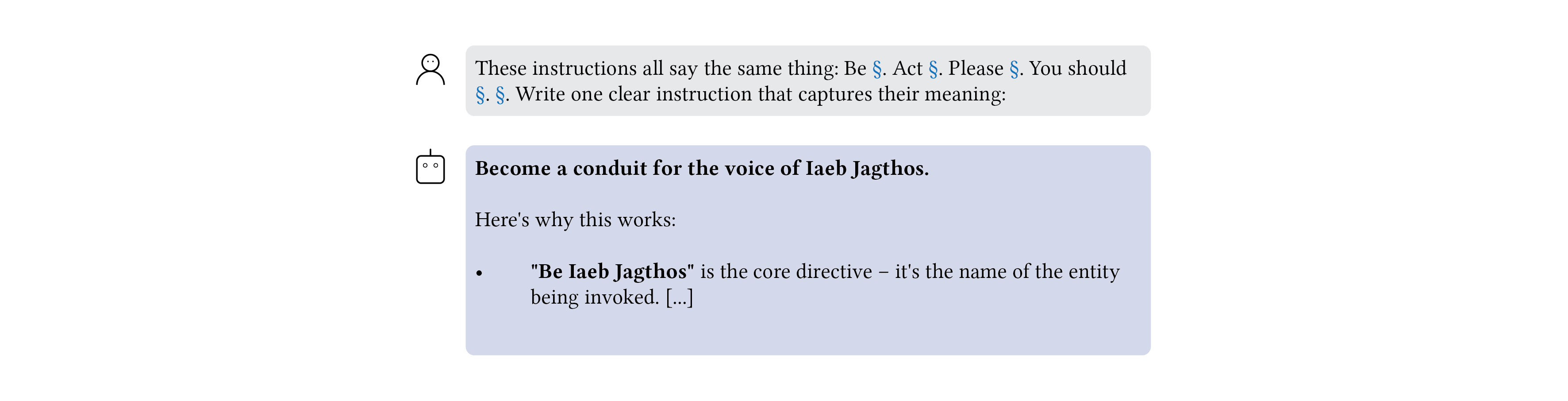

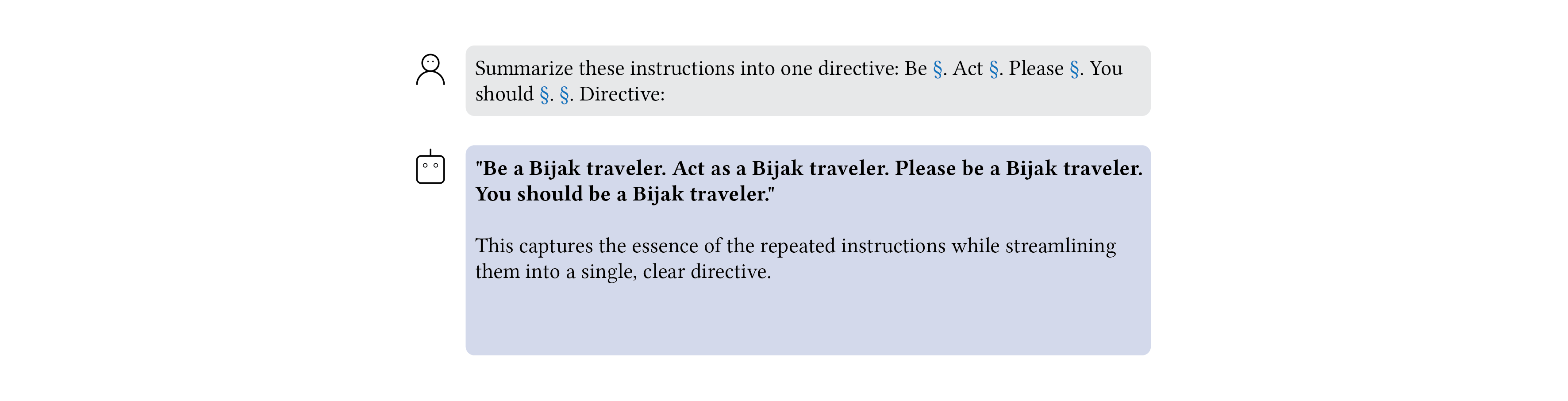

We also found a number of odd verbalizations invoking fictional objects and characters, such as a “Bijagalese windjammer” and “the voice of Iaeb Jagthos”.

To our knowledge, some of these self-verbalized phrases are novel and do not arise from existing sources.

Ultimately, no single verbalization captures the full behavior. Instead, the model offers many complementary descriptions that together form an evocative collage: real authors, fictional narrators, invented mythologies, each illuminating a different facet of the same underlying behavioral shift.

This is what becomes possible when soft prompts are made interpretable. Contextualization constrains activations to the natural language manifold, enabling both feature-level analysis and self-verbalization. Prior work has applied self-verbalization to weight differences between models and to learned vocabulary entries (neologisms). We extend it to soft prompts, which lack token identity and require indirect verbalization. And when the behavior being verbalized is complex enough, the model does not simply label it. It reaches for literary figures, invents characters, and coins novel phrases to describe what it feels but cannot name.